Breaking: OpenAI's GPT-5.5 Powers Codex on NVIDIA GB200 NVL72 — Internal Rollout Yields Dramatic Efficiency Improvements

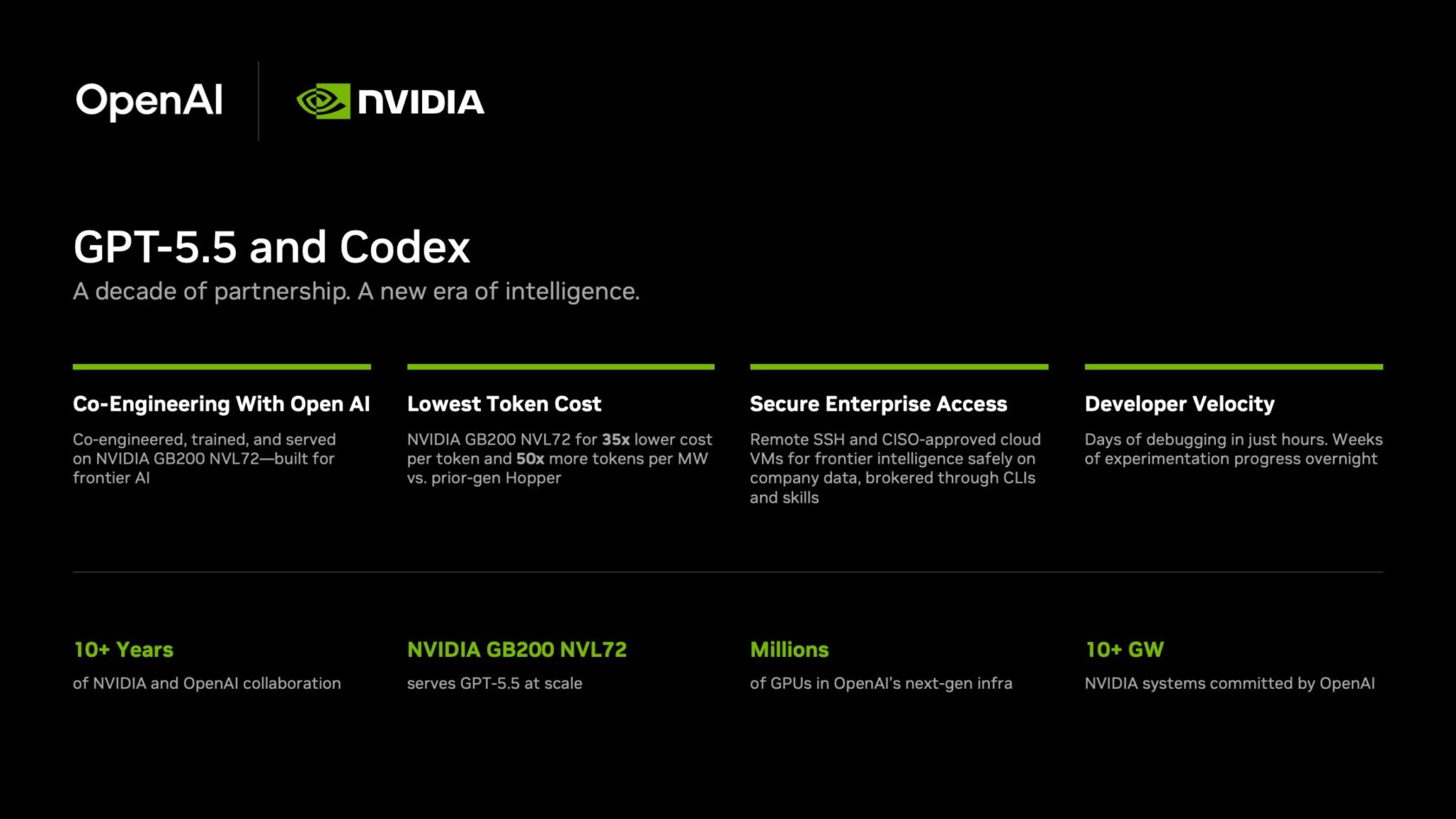

NVIDIA has rolled out Codex, OpenAI's agentic coding platform, powered by OpenAI's latest frontier model, GPT-5.5, and running on NVIDIA's own GB200 NVL72 rack-scale systems. More than 10,000 employees across engineering, product, legal, marketing, finance, sales, HR, operations, and developer programs now use GPT-5.5-powered Codex, and they describe the results as “mind-blowing” and “life-changing.”

Debugging cycles that once took days are now closing in hours. Experimentation that required weeks is turning into overnight progress in complex, multi-file codebases. Teams are shipping end-to-end features from natural-language prompts with stronger reliability and fewer wasted cycles.

Quantifiable Gains on NVIDIA's Proprietary Hardware

According to NVIDIA, GB200 NVL72 systems deliver 35x lower cost per million tokens and 50x higher token output per second per megawatt than prior-generation systems, making frontier-model inference viable at enterprise scale. That economic advantage is enabling broad internal adoption.
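To make those ratios concrete, the short Python sketch below applies them to a baseline. Only the 35x and 50x factors come from NVIDIA's claims; the baseline cost and throughput figures are hypothetical placeholders chosen for illustration, not measured values.

```python
# Ratios quoted by NVIDIA for GB200 NVL72 versus prior-generation systems.
COST_REDUCTION = 35    # 35x lower cost per million tokens
THROUGHPUT_GAIN = 50   # 50x higher token output per second per megawatt


def scaled_cost(baseline_cost_per_million: float) -> float:
    """Cost per million tokens after a 35x reduction."""
    return baseline_cost_per_million / COST_REDUCTION


def scaled_throughput(baseline_tokens_per_sec_per_mw: float) -> float:
    """Tokens per second per megawatt after a 50x gain."""
    return baseline_tokens_per_sec_per_mw * THROUGHPUT_GAIN


# Hypothetical baselines, for illustration only.
baseline_cost = 7.00        # dollars per million tokens (assumed)
baseline_tps_per_mw = 1000  # tokens per second per megawatt (assumed)

print(f"cost per million tokens: ${scaled_cost(baseline_cost):.2f}")
print(f"tokens/sec/MW: {scaled_throughput(baseline_tps_per_mw):,.0f}")
```

Under these assumed baselines, a workload that cost $7.00 per million tokens would cost about $0.20, and the same megawatt of power would serve 50 times as many tokens per second.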

“Let’s jump to lightspeed. Welcome to the age of AI.” — Jensen Huang, NVIDIA founder and CEO, in a company-wide email urging all employees to use Codex.

Huang's directive underscores the strategic importance of the rollout. Engineers have had access to GPT-5.5 through Codex for a few weeks, and the measurable gains are already reshaping workflows.

Background: A Decade of Full-Stack Collaboration

The GPT-5.5 launch and Codex rollout reflect more than 10 years of collaboration between NVIDIA and OpenAI. The partnership began in 2016, when Huang hand-delivered the first NVIDIA DGX system to OpenAI. Since then, the companies have worked closely on AI infrastructure and model optimization.

NVIDIA's work extends beyond internal use. The company collaborates with every frontier model company to accelerate AI agents and help partners build the world's best, lowest-cost, and most power-efficient models.

Enterprise Security: Zero-Data Retention and Sandboxed Agents

To ensure secure operation within enterprise environments, Codex supports remote Secure Shell (SSH) connections to approved cloud virtual machines. This allows agents to work with real company data without exposing it externally. NVIDIA IT rolled out cloud VMs for every employee, providing a dedicated sandbox with full auditability.

A zero-data retention policy governs the deployment, and agents access production systems with read-only permissions through command-line interfaces and Skills—the same agentic toolkit NVIDIA uses for automation workflows across the company. Users control the Codex agent from a familiar user interface.
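The article does not describe how read-only access is enforced. As a rough illustration of the idea, the Python sketch below checks a command against an allowlist before an agent would be permitted to run it. The function name, the allowlist, and the operator list are all hypothetical; this is not NVIDIA's or Codex's actual mechanism, and a real deployment would more likely rely on operating-system or service-level permissions.

```python
import shlex

# Hypothetical allowlist of commands that only read data.
READ_ONLY_COMMANDS = frozenset({"cat", "ls", "grep", "head", "tail", "wc"})

# Shell operators that could redirect output or chain further commands.
# Rejecting them anywhere in the string is deliberately conservative: it also
# blocks harmless quoted uses such as grep 'a|b'.
SHELL_OPERATORS = (">", "<", "|", ";", "&", "`", "$(")


def is_read_only(command: str) -> bool:
    """Return True only if the command starts with an allowlisted program
    and contains no shell operators."""
    if any(op in command for op in SHELL_OPERATORS):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes or similar: refuse rather than guess.
        return False
    return bool(tokens) and tokens[0] in READ_ONLY_COMMANDS


for candidate in ["ls -la /var/log", "rm -rf /tmp/x", "cat a.txt > b.txt"]:
    print(candidate, "->", "allowed" if is_read_only(candidate) else "blocked")
```

An allowlist fails closed: anything not explicitly recognized as read-only is blocked, which suits a setting where agents touch production systems.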

What This Means: The Age of AI Agents in Enterprise Knowledge Work

This deployment signals a major shift in enterprise AI usage. AI agents are moving beyond coding assistance into knowledge work—processing information, solving complex problems, generating ideas, and driving innovation. The combination of GPT-5.5's frontier capabilities and NVIDIA's specialized infrastructure makes this practical at scale.

The reported improvements in debugging speed and experimentation turnaround suggest that AI agents can now work reliably in complex, multi-file codebases. For industries that depend on software development, this could compress product cycles from months to weeks.

NVIDIA's internal success with GPT-5.5-powered Codex also sets the stage for broader commercial availability. As frontier models become more economical on NVIDIA hardware, other enterprises may follow suit, accelerating the adoption of agentic AI across sectors.