Building Context-Aware Intrusion Detection: A Step-by-Step Guide to Implementing SnortML and Agentic AI

Introduction

Traditional intrusion detection systems (IDS) like Snort have long relied on signature-based methods—matching network traffic against known patterns of malicious activity. While effective against known threats, this approach struggles with novel attacks, encrypted traffic, and subtle anomalies. The evolution of SnortML and agentic AI shifts the paradigm from “does this match a known signature?” to “does this behavior make sense in context?” This guide walks you through the practical steps to modernize your IDS by integrating machine learning and autonomous agents, enabling your sensors to think and adapt in real time.

What You Need

- A running Snort 3 instance (or newer) with plugin support.

- Access to a machine learning framework (e.g., PyTorch, TensorFlow, or Scikit-learn).

- Historical network traffic data (pcap files or flow logs) for training.

- Compute resources: GPU recommended for training, at least 8 GB RAM for inference.

- Agentic AI middleware (e.g., custom Python scripts or frameworks like Ray or LangChain).

- Basic proficiency in Python, shell scripting, and network protocols.

- A test environment to validate changes without disrupting production.

Step-by-Step Implementation

Step 1: Assess Your Current Signature-Based Baseline

Before introducing ML, document your existing Snort configuration: enabled rules, performance metrics, and common false positives. Identify gaps, meaning threats that bypass signatures and rules that generate excessive alerts. This baseline lets you measure improvement and prioritize which behaviors to model. Use snort -c snort.lua -T to validate your configuration and tcpdump to sample traffic.
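One way to find the noisiest rules for the baseline is to count alerts per rule. The sketch below assumes alerts are written as one JSON object per line (for example by Snort 3's alert_json logger) and that the logger's field list has been configured to include gid, sid, and msg; the function name noisiest_rules and the sample records are hypothetical.

```python
import json
from collections import Counter


def noisiest_rules(lines, top_n=5):
    """Count alerts per (gid, sid) from JSON-lines alert records."""
    counts = Counter()
    messages = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip truncated or non-JSON lines
        key = (record.get("gid"), record.get("sid"))
        counts[key] += 1
        messages.setdefault(key, record.get("msg", ""))
    return [
        (gid, sid, messages[(gid, sid)], n)
        for (gid, sid), n in counts.most_common(top_n)
    ]


# Hypothetical sample records; in practice, iterate over the alert log file.
sample = [
    '{"gid": 1, "sid": 1000001, "msg": "Possible port scan"}',
    '{"gid": 1, "sid": 1000002, "msg": "Suspicious user agent"}',
    '{"gid": 1, "sid": 1000001, "msg": "Possible port scan"}',
]

for gid, sid, msg, n in noisiest_rules(sample):
    print(f"{gid}:{sid} {msg!r} fired {n} times")
```

Rules that dominate this list are the first candidates to review for false positives before any model is trained.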

Step 2: Prepare Your Training Data

Machine learning requires clean, labeled data. Collect benign and malicious traffic from your network over a representative period. Use tools like Zeek to extract features (flow duration, packet sizes, protocol flags). Label the data using existing Snort alerts, threat intelligence feeds, or manual review. Store in a format like CSV or Parquet. Aim for at least one million samples for robust anomaly detection.
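The labeling step can be sketched as a join between flow records and the flows that triggered alerts. This is a minimal illustration: the column names (src, dst, dst_port, duration, bytes, packets) are hypothetical and are not Zeek's actual log field names, and matching on a (source, destination, port) triple is only one possible labeling rule.

```python
import csv
import io


def label_flows(flow_rows, alert_keys):
    """Build feature dicts and mark flows that match a known alert as 1."""
    labeled = []
    for row in flow_rows:
        key = (row["src"], row["dst"], row["dst_port"])
        packets = int(row["packets"])
        total_bytes = int(row["bytes"])
        labeled.append({
            "duration": float(row["duration"]),
            "bytes": total_bytes,
            "packets": packets,
            # Derived feature: average payload per packet.
            "bytes_per_packet": total_bytes / max(packets, 1),
            "label": 1 if key in alert_keys else 0,
        })
    return labeled


# Hypothetical flow export; real data would come from a file on disk.
FLOWS_CSV = """src,dst,dst_port,duration,bytes,packets
10.0.0.5,10.0.0.9,443,1.2,4800,12
10.0.0.7,10.0.0.9,22,0.1,120,3
"""

# Flows that an existing Snort alert or analyst review marked as malicious.
alert_keys = {("10.0.0.7", "10.0.0.9", "22")}

rows = list(csv.DictReader(io.StringIO(FLOWS_CSV)))
dataset = label_flows(rows, alert_keys)
for item in dataset:
    print(item)
```

Labels derived from existing alerts inherit the blind spots of the signatures that produced them, so a manually reviewed subset is worth keeping for validation.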

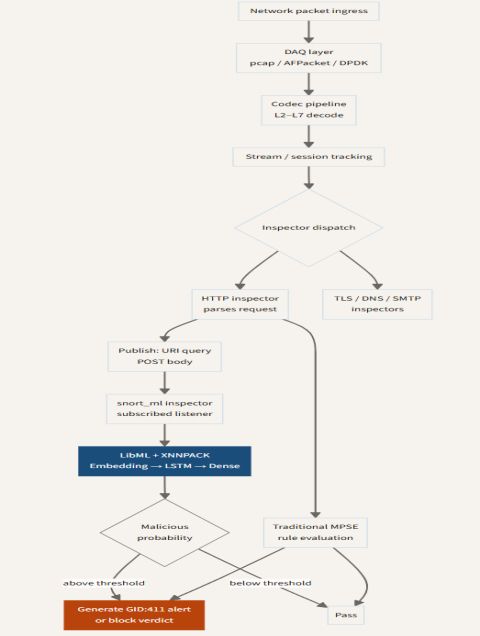

Step 3: Integrate SnortML Plugin

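SnortML is the machine learning detection engine for Snort 3. It is not installed through a package manager: upstream builds it into Snort against the LibML library, so use a Snort 3 binary compiled with that support. Enable it in snort.lua. This guide writes the configuration schematically as machine_learning = { model = 'path/to/model.onnx', threshold = 0.8 }; the actual module and option names depend on your release (upstream documentation describes snort_ml_engine and snort_ml modules), so confirm them with snort --help-module before editing your config. The engine outputs a confidence score for the data it inspects, which in upstream releases is HTTP request parameters rather than every packet, and alerts when the score crosses the threshold. Test with a simple pre-trained model (e.g., a random forest classifier for port scans, if your build accepts that model type) to verify that the model loads and scores are produced.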

Step 4: Train a Context-Aware Model

Design your ML model to answer “does this traffic make sense in context?” rather than “is it malicious?” Use techniques like autoencoders (unsupervised anomaly detection) or transformer networks (sequence modeling). Train on your prepared data, optimizing for a low false positive rate, and validate on a held-out test set. Export the final model in the format your SnortML build loads. This guide assumes ONNX; upstream LibML is built on TensorFlow, so check the expected format before converting.
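To make the "in context" idea concrete without a deep learning dependency, the sketch below swaps in a much simpler technique than an autoencoder or transformer: a per-context z-score baseline. Each context (here a hypothetical pair of host role and time bucket) gets its own mean and standard deviation, and a value is scored by how far it sits from the norm for that context. The class name ContextBaseline and the sample numbers are illustrative assumptions.

```python
from collections import defaultdict
from statistics import mean, pstdev


class ContextBaseline:
    """Per-context mean/std baseline; score is the absolute z-score."""

    def __init__(self, min_std=1.0):
        self.min_std = min_std  # floor to avoid dividing by a tiny std
        self._stats = {}

    def fit(self, samples):
        """samples: iterable of (context, value) pairs."""
        grouped = defaultdict(list)
        for context, value in samples:
            grouped[context].append(value)
        self._stats = {
            ctx: (mean(vals), max(pstdev(vals), self.min_std))
            for ctx, vals in grouped.items()
        }
        return self

    def score(self, context, value):
        """Distance from the context's norm; unseen contexts score infinity."""
        if context not in self._stats:
            return float("inf")
        mu, sigma = self._stats[context]
        return abs(value - mu) / sigma


# Hypothetical training data: bytes transferred by a database host.
train = [
    (("db", "night"), 100), (("db", "night"), 120), (("db", "night"), 110),
    (("db", "day"), 5000), (("db", "day"), 5200), (("db", "day"), 4800),
]
model = ContextBaseline().fit(train)

# The same 5000-byte transfer is ordinary by day and anomalous at night.
day_score = model.score(("db", "day"), 5000)
night_score = model.score(("db", "night"), 5000)
print(day_score, night_score)
```

The same transfer size produces very different scores depending on context, which is the property a learned model should reproduce at larger scale.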

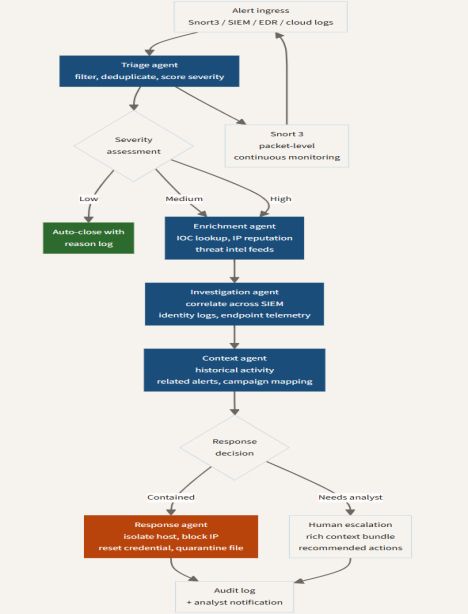

Step 5: Deploy Agentic AI for Autonomous Response

Agentic AI introduces autonomous agents that can correlate SnortML outputs with additional context (user identity, time of day, historical baselines) and take action, such as dynamically blocking an IP, reconfiguring firewall rules, or raising a human-priority alert. Implement a lightweight agent using an event-driven architecture. Example: a Python script that subscribes to Snort’s unified2 output, queries an LLM for justification, and executes an iptables command if confidence exceeds 0.95 and the event falls in unusual hours.
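The decision rule described above can be sketched as a pure function that returns an action and, for a block, the firewall command it would run. This is a dry-run illustration under several assumptions: the Detection fields, the 08:00 to 18:00 working window, and the 0.8 alert threshold are hypothetical, the unified2 subscription and the LLM query are omitted, and the command is only constructed, never executed.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class Detection:
    src_ip: str
    score: float  # model confidence in [0, 1]
    hour: int     # local hour of day, 0-23


def is_unusual_hour(hour, work_start=8, work_end=18):
    """Assumed policy: anything outside working hours is unusual."""
    return not (work_start <= hour < work_end)


def decide(det, block_threshold=0.95, alert_threshold=0.8):
    """Return (action, command); command is None unless action is 'block'."""
    # Validate the address so untrusted input never reaches the command line.
    ip = ipaddress.ip_address(det.src_ip)  # raises ValueError if malformed
    if det.score > block_threshold and is_unusual_hour(det.hour):
        tool = "ip6tables" if ip.version == 6 else "iptables"
        return "block", [tool, "-I", "INPUT", "-s", str(ip), "-j", "DROP"]
    if det.score >= alert_threshold:
        return "alert", None
    return "ignore", None


action, command = decide(Detection("203.0.113.7", 0.97, 3))
print(action, command)
```

Returning the command as an argument list rather than a shell string keeps the execution step separate, so it can be logged, reviewed, or gated behind a manual override before anything touches the firewall.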

Step 6: Establish Feedback Loop and Continuous Learning

Set up a pipeline to capture SnortML outputs, human confirmations, and incident outcomes. Retrain the model periodically (e.g., weekly) using newly labeled data. Use agentic AI to detect model drift: when the distribution of anomaly scores shifts away from the baseline recorded at validation time, trigger a retraining job. This ensures your IDS evolves with your network and threat landscape.
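A drift check can be as simple as comparing recent anomaly scores against a reference window. The sketch below flags drift when the recent mean moves more than a chosen number of reference standard deviations; the function name drift_detected, the max_shift value of 2.0, and the sample scores are assumptions, and a production system might prefer a proper statistical test.

```python
from statistics import mean, pstdev


def drift_detected(reference_scores, recent_scores, max_shift=2.0):
    """True when the recent mean is more than max_shift reference
    standard deviations away from the reference mean."""
    if not reference_scores or not recent_scores:
        raise ValueError("both score windows must be non-empty")
    ref_mean = mean(reference_scores)
    ref_std = max(pstdev(reference_scores), 1e-9)  # guard against zero std
    shift = abs(mean(recent_scores) - ref_mean) / ref_std
    return shift > max_shift


# Hypothetical anomaly scores captured at validation time and in production.
reference = [0.10, 0.12, 0.11, 0.09, 0.13]
stable_week = [0.11, 0.12, 0.10]
drifted_week = [0.20, 0.22, 0.21]

if drift_detected(reference, drifted_week):
    print("drift detected: schedule a retraining job")
```

Keeping the check separate from the retraining job makes it easy to log every trigger and to require human approval before a new model is deployed.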

Tips for Success

- Start small: Pilot SnortML with one model on a non-critical subnet. Monitor false positive rates carefully—context-aware systems can initially overfit to skewed training data.

- Emphasize explainability: Use SHAP or LIME to understand why your model flagged a flow. This builds trust and aids troubleshooting.

- Combine signature and ML: Run Snort’s signature engine in parallel with SnortML. Use ML to prioritize alerts from signatures, or to catch false negatives.

- Secure the agent: Agentic AI that takes autonomous actions must be sandboxed with strict permissions. Always log decisions and allow manual override.

- Monitor performance: ML inference adds latency. Profile with perf and consider hardware acceleration (e.g., Intel QAT) if throughput drops below 1 Gbps.

- Invest in data hygiene: Garbage in, garbage out. Regularly audit your training data for bias (e.g., overrepresented attack types).

- Stay current: SnortML and agentic AI are rapidly evolving. Follow the Snort blog and community repos for plugin updates.
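The tip about combining signatures and ML can be sketched as a small triage function. The priority names and the 0.8 threshold are illustrative assumptions, not part of Snort or SnortML.

```python
def prioritize(signature_hit, ml_score, ml_threshold=0.8):
    """Combine a signature verdict with an ML score into a triage level."""
    ml_hit = ml_score >= ml_threshold
    if signature_hit and ml_hit:
        return "high"    # both engines agree
    if signature_hit:
        return "medium"  # known pattern, but the model sees ordinary traffic
    if ml_hit:
        return "review"  # possible signature false negative
    return "none"


# Hypothetical events: (signature fired?, model score)
events = [(True, 0.93), (True, 0.20), (False, 0.88), (False, 0.05)]
levels = [prioritize(sig, score) for sig, score in events]
print(levels)
```

Tracking how often events land in the "medium" and "review" levels gives a rough measure of where the two engines disagree and which one needs tuning.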

By following these steps, you transform your IDS from a rigid pattern matcher into an intelligent, context-aware system that actively adapts to new threats—a true thinking sensor.